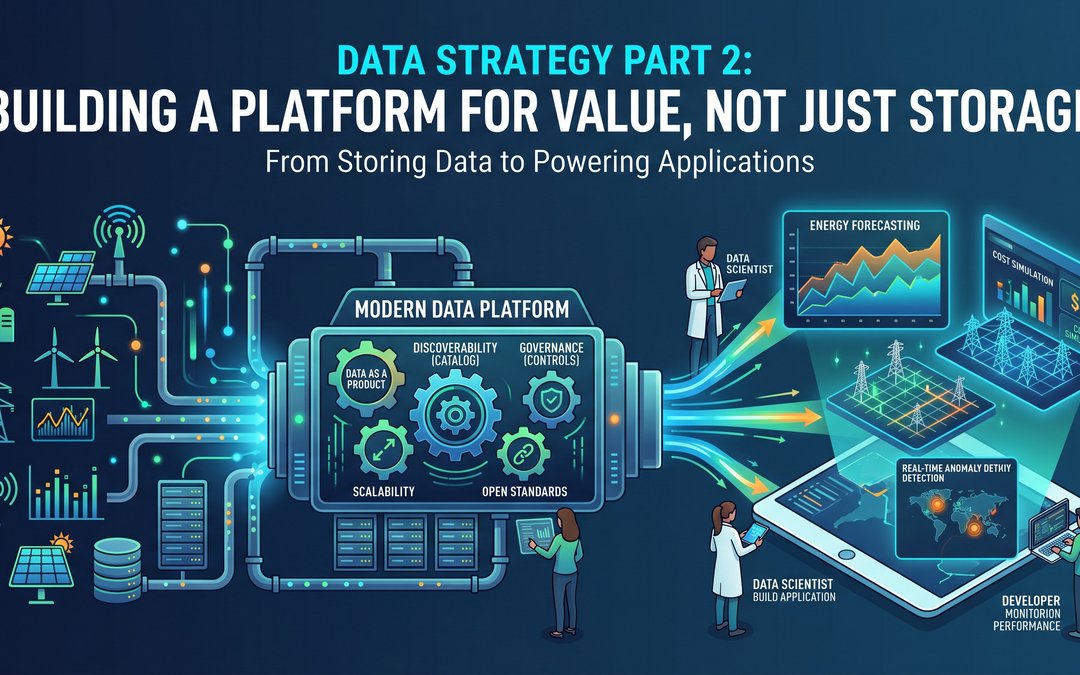

In the first post of this series, we started at the end: Data Applications. We discussed how true business value is generated when users actively utilize data to solve problems, whether that is forecasting energy consumption, simulating grid costs, or detecting anomalies in wind turbine telemetry. We also established that the key to delivering these applications efficiently is treating your data professionals as developers and prioritizing their experience.

Now, we are taking one step back. If data applications are the vehicles driving business value, the Data Platform and Management layer is the engine room.

It is easy to fall into the trap of viewing a data platform as nothing more than a massive, centralized storage unit: a place to dump everything and figure it out later. But in our "backwards" approach, the platform's primary purpose is to serve the applications and the developers building them. It must be built for utilization, not just accumulation.

Here is how we think about building and managing a data platform that actually accelerates value generation.

Treating Data as a Product

When you shift your focus from "storing data" to "serving applications," your mindset shifts from IT infrastructure to product management. Your data platform is a product, and your internal developers, data scientists, and analysts are your customers.

If an application developer needs to build a dashboard for customer month-to-month energy costs, they shouldn't have to parse raw, undocumented JSON files or beg a central engineering team for access. The data they need should be clean, modeled, reliable, and served as a ready-to-use product.

This requires rigorous management, but the payoff is a much shorter time-to-market for your most important applications.

Core Pillars of Effective Data Management

To make life easy for the developers building on top of your platform, you need to establish a few foundational capabilities.

Discoverability and Context

Data is useless if the people who need it don't know it exists or what it means. A robust data catalog is non-negotiable. Developers need a centralized way to search for datasets, understand the lineage (where the data came from), and view the metadata. If a developer is building a cost simulation, they need to easily find the sensor inventory data and immediately understand its update frequency and data types.
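To make this concrete, here is a minimal sketch of what a catalog entry and a search might look like. It is an in-memory toy, not a real catalog product: the `DatasetEntry` fields, the `Catalog` class, and the dataset names are all hypothetical and chosen only to mirror the sensor inventory example above.

```python
from dataclasses import dataclass


@dataclass
class DatasetEntry:
    """One catalog record: what the dataset is, how fresh it is, where it came from."""
    name: str
    description: str
    owner: str
    update_frequency: str            # e.g. "hourly", "daily"
    schema: dict[str, str]           # column name -> data type
    upstream: tuple[str, ...] = ()   # lineage: datasets this one is derived from


class Catalog:
    """Hypothetical in-memory catalog; a real one would be backed by a service."""

    def __init__(self) -> None:
        self._entries: dict[str, DatasetEntry] = {}

    def register(self, entry: DatasetEntry) -> None:
        self._entries[entry.name] = entry

    def search(self, term: str) -> list[DatasetEntry]:
        """Case-insensitive match on dataset name or description."""
        needle = term.lower()
        return [
            e for e in self._entries.values()
            if needle in e.name.lower() or needle in e.description.lower()
        ]

    def lineage(self, name: str) -> list[str]:
        """Walk upstream references and return every ancestor dataset name."""
        seen: list[str] = []
        stack = list(self._entries[name].upstream)
        while stack:
            current = stack.pop()
            if current in seen:
                continue
            seen.append(current)
            if current in self._entries:
                stack.extend(self._entries[current].upstream)
        return seen


catalog = Catalog()
catalog.register(DatasetEntry(
    name="raw_sensor_registry",
    description="Unprocessed export of registered field sensors",
    owner="platform-team",
    update_frequency="hourly",
    schema={"payload": "string"},
))
catalog.register(DatasetEntry(
    name="sensor_inventory",
    description="Cleaned list of deployed sensors and their locations",
    owner="platform-team",
    update_frequency="daily",
    schema={"sensor_id": "string", "site": "string", "installed_on": "date"},
    upstream=("raw_sensor_registry",),
))

for hit in catalog.search("inventory"):
    print(hit.name, hit.update_frequency, hit.schema, catalog.lineage(hit.name))
```

The point is less the implementation than the shape of the record: update frequency, data types, and lineage sit next to the dataset name, so a developer gets the context in the same place they find the data.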

Governance That Enables (Not Blocks)

In the previous post, we mentioned using automated "toll gates" rather than manual gatekeepers. This is where that principle lives. Good data management includes automated quality checks, standardized access controls, and compliance masking (especially critical when handling PII alongside usage data). When governance is baked into the platform via code, developers can self-serve access securely without waiting weeks for an approval ticket.
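As a rough illustration of governance as code, the sketch below combines a quality check and PII masking into a single automated toll gate. Everything here is an assumption made for the example: the column names (`meter_id`, `usage_kwh`, `email`, `customer_name`), the rules themselves, and the choice of a truncated SHA-256 digest as the mask. An unsalted hash is only pseudonymization, so a real platform would follow whatever its compliance requirements dictate.

```python
import hashlib

# Hypothetical list of columns treated as PII in this example.
PII_COLUMNS = {"customer_name", "email"}


def mask_pii(record: dict) -> dict:
    """Return a copy of the record with PII values replaced by a short, stable digest."""
    masked = {}
    for key, value in record.items():
        if key in PII_COLUMNS and value is not None:
            digest = hashlib.sha256(str(value).encode("utf-8")).hexdigest()
            masked[key] = digest[:12]
        else:
            masked[key] = value
    return masked


def quality_issues(record: dict) -> list[str]:
    """Return a list of rule violations; an empty list means the record passes."""
    issues = []
    if not record.get("meter_id"):
        issues.append("missing meter_id")
    usage = record.get("usage_kwh")
    is_number = isinstance(usage, (int, float)) and not isinstance(usage, bool)
    if not is_number or usage < 0:
        issues.append("usage_kwh must be a non-negative number")
    return issues


def toll_gate(records: list[dict]) -> tuple[list[dict], list[tuple[dict, list[str]]]]:
    """Split records into (passed, rejected); both sides are masked before leaving the gate."""
    passed, rejected = [], []
    for record in records:
        issues = quality_issues(record)
        if issues:
            rejected.append((mask_pii(record), issues))
        else:
            passed.append(mask_pii(record))
    return passed, rejected


passed, rejected = toll_gate([
    {"meter_id": "m-001", "email": "a@example.com", "usage_kwh": 12.5},
    {"meter_id": "", "email": "b@example.com", "usage_kwh": -3},
])
print(len(passed), "passed;", len(rejected), "rejected")
for record, issues in rejected:
    print(record, issues)
```

Because the rules run on every record automatically, nobody has to approve a ticket for the clean rows, and the rejected rows come back with a reason attached instead of silently disappearing.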

Cost-Aware Scalability

Clean tech data, especially time-series data from IoT sensors or grid monitoring, grows quickly: every additional sensor and every increase in sampling rate adds to the volume. A modern data platform should separate storage and compute. This allows you to store massive amounts of historical data cost-effectively, while elastically scaling compute resources only when heavy transformation or application querying is actively happening. Giving teams visibility into the compute costs of their specific workloads also ties back to our core principle of "Ownership."
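Cost visibility can start very simply. The sketch below assumes the platform can export a log of query runs tagged with a team and a workload, and that compute is billed at a flat hourly rate; the `QueryRun` record, the team names, and the rate of 4.0 per compute hour are all made up for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class QueryRun:
    """One entry from a hypothetical compute usage log."""
    team: str
    workload: str
    compute_seconds: float


def cost_by_team(runs: list[QueryRun], rate_per_compute_hour: float) -> dict[str, float]:
    """Sum compute cost per team, assuming a single flat hourly rate."""
    totals: dict[str, float] = defaultdict(float)
    for run in runs:
        totals[run.team] += run.compute_seconds / 3600 * rate_per_compute_hour
    return {team: round(cost, 2) for team, cost in totals.items()}


runs = [
    QueryRun(team="forecasting", workload="daily_retrain", compute_seconds=7200),
    QueryRun(team="grid-ops", workload="cost_simulation", compute_seconds=1800),
    QueryRun(team="forecasting", workload="history_backfill", compute_seconds=3600),
]
print(cost_by_team(runs, rate_per_compute_hour=4.0))
```

Real billing models are rarely this flat, but even a crude breakdown like this lets a team see which of its workloads drive spend, which is the precondition for owning it.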

Interoperability and Open Standards

The data applications we build tomorrow might require tools we don't have today. By managing data in open table formats (like Delta Lake or Apache Iceberg), you prevent vendor lock-in. Your platform remains an open, flexible foundation that can integrate with whatever machine learning framework, BI tool, or custom application your teams need to deploy next.

Connecting Platform to DevEx

Ultimately, your platform architecture should directly reflect the "Golden Path" we discussed in Part 1. By abstracting away the complexities of infrastructure, security, and raw data processing, the platform empowers developers to focus entirely on business logic and user impact.

When your data management strategy is tight, your applications are reliable. When your platform is developer-friendly, your innovation velocity increases.

Conclusion

A well-managed data platform acts as a force multiplier for every data application your organization builds. But a platform is only as good as the fuel that feeds it. In the final post of this series, we will take one last step backward to the very beginning of the lifecycle and explore Data Ingestion: the strategies for reliably capturing and moving data from the chaotic outside world into your structured ecosystem.